Create an API Key

- In your organization’s DeepRails API Console, go to API Keys.

- Click Create key, name it, then copy the key.

- (Optional) Save it as the DEEPRAILS_API_KEY environment variable.
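If you save the key as an environment variable, your code can read it at startup instead of hard-coding it. The snippet below is a minimal sketch using only the Python standard library; the helper name load_api_key is hypothetical and is not part of the DeepRails SDK.

```python
import os


def load_api_key(env=os.environ):
    """Return the API key stored in DEEPRAILS_API_KEY, or raise a clear error.

    `load_api_key` is an illustrative helper, not an SDK function.
    """
    key = env.get("DEEPRAILS_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "DEEPRAILS_API_KEY is not set; export it before running this script."
        )
    return key
```

Failing fast with an explicit message makes a missing key easier to diagnose than an authentication error later in the run.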

Install the SDK

- Python

- TypeScript / Node

- Ruby

- Go

Create your first Defend workflow

Before you can submit events to be evaluated and potentially remediated, you have to create and configure a workflow. A workflow is an abstraction for a specific production use of Gen AI, and its configuration determines which guardrail metrics are evaluated, at what thresholds, and how issues are remediated.

Types of Workflows

Workflows can have either custom or automatic thresholds, set by the threshold_type parameter. Automatic workflows have adaptive thresholds that change as events are recorded, starting at low, medium, or high values in an automatic_hallucination_tolerance_levels dictionary. Custom workflows have static, fully customizable thresholds for each selected metric, set as floating point values in a custom_hallucination_threshold_values dictionary.

Note that workflows set to automatic must specify automatic_hallucination_tolerance_levels and do not need custom_hallucination_threshold_values, and vice versa for workflows set to custom.
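The pairing rule between threshold_type and the two threshold dictionaries can be checked locally before a workflow is created. The following is a sketch only: build_workflow_config is a hypothetical helper that assembles a plain dictionary from the field names documented here, and it does not call the DeepRails SDK. The metric names in the usage lines are illustrative.

```python
ACTIONS = {"fixit", "regen", "do_nothing"}
LEVELS = {"low", "medium", "high"}


def build_workflow_config(name, threshold_type, improvement_action, thresholds):
    """Return a workflow config dict, enforcing the automatic/custom pairing.

    Hypothetical helper: it only validates and assembles fields locally.
    """
    if improvement_action not in ACTIONS:
        raise ValueError(f"improvement_action must be one of {sorted(ACTIONS)}")

    if threshold_type == "automatic":
        field = "automatic_hallucination_tolerance_levels"
        bad = {m: v for m, v in thresholds.items()
               if not (isinstance(v, str) and v in LEVELS)}
    elif threshold_type == "custom":
        field = "custom_hallucination_threshold_values"
        bad = {m: v for m, v in thresholds.items()
               if isinstance(v, bool)
               or not isinstance(v, (int, float))
               or not 0.0 <= v <= 1.0}
    else:
        raise ValueError("threshold_type must be 'automatic' or 'custom'")

    if bad:
        raise ValueError(f"invalid values for {field}: {bad}")

    # Only the dictionary that matches threshold_type is included.
    return {
        "name": name,
        "threshold_type": threshold_type,
        "improvement_action": improvement_action,
        field: dict(thresholds),
    }


# Illustrative metric names; substitute the metrics your workflow evaluates.
custom_cfg = build_workflow_config(
    "support-bot", "custom", "fixit", {"correctness": 0.8, "completeness": 0.7}
)
auto_cfg = build_workflow_config(
    "support-bot-auto", "automatic", "regen", {"correctness": "medium"}
)
```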

Required Parameters

| Field | Type | Description |
|---|---|---|
| name | string | The name of the workflow. |
| threshold_type | string | The workflow type (either automatic or custom), which determines whether thresholds are specified by the user or set automatically. |
| improvement_action | string | The remediation strategy when outputs fail guardrail metrics. fixit rewrites the failing output to pass the metrics. regen prompts the LLM to regenerate the output from scratch. do_nothing records the failure without attempting remediation. |

Optional Parameters

| Field | Type | Description |
|---|---|---|
| description | string | A description of the workflow's use case, or other additional information. |
| custom_hallucination_threshold_values | object | The mapping of guardrail metrics to floating point threshold values (0.0-1.0). Required when threshold_type is custom. This determines which metrics Defend will evaluate and how strict each threshold is. |
| automatic_hallucination_tolerance_levels | object | The mapping of guardrail metrics to tolerance levels (low, medium, or high). Required when threshold_type is automatic. This determines which metrics Defend will evaluate and how strict the adaptive thresholds start. |
| max_improvement_attempts | integer | The maximum number of improvement attempts applied to one workflow event before it is considered failed. Defaults to 10. |
| web_search | boolean | Whether the web search extended AI capability is available to the evaluation and remediation models. |
| file_search | string[] | A list of uploaded file IDs for the evaluation and remediation models to search using the file search extended AI capability. Upload files first via /files/upload. |
| context_awareness | boolean | Whether the context awareness extended AI capability is available to the evaluation and remediation models. |

Submit a Workflow Event

Use the SDK to log a production event (input + output). This creates a workflow event and automatically triggers an associated evaluation using the guardrail metrics you pass. If the evaluation fails for one or more metrics, the improvement action specified for the associated workflow is used to remediate the output. The improved output is then evaluated and, if needed, improved again.

The improvement process will repeat for that event until all guardrails pass or the maximum number of retries is reached.
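The evaluate-and-improve cycle described above can be pictured as a simple loop. This is a local illustration of the control flow only, not the service's implementation: run_defend_loop, evaluate, and improve are hypothetical names, and the two callables stand in for the evaluation and remediation steps that Defend runs for you.

```python
def run_defend_loop(output, evaluate, improve, max_improvement_attempts=10):
    """Illustrate the loop: evaluate, then improve until all metrics pass.

    evaluate(output) -> dict mapping metric name to True (pass) / False (fail)
    improve(output, failed_metrics) -> a new candidate output
    Returns (final_output, attempts_used, passed).
    """
    attempts = 0
    while True:
        results = evaluate(output)
        failed = [metric for metric, ok in results.items() if not ok]
        if not failed:
            return output, attempts, True    # all guardrails pass
        if attempts >= max_improvement_attempts:
            return output, attempts, False   # retries exhausted
        output = improve(output, failed)
        attempts += 1


# Stub callables for demonstration only.
def evaluate(text):
    return {"correctness": "Paris" in text}


def improve(text, failed):
    return "The capital of France is Paris."


final, used, passed = run_defend_loop(
    "The capital of France is Lyon.", evaluate, improve
)
```

In this stubbed run the first evaluation fails, one improvement is applied, and the second evaluation passes.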

Tip: You can also submit a workflow event via the DeepRails API Playground.

Required Parameters

| Field | Type | Description |
|---|---|---|
| workflow_id | string | The ID of the Defend workflow associated with this event (find it in Console → Defend → Manage Workflows). |
| model_input | object | Your prompt plus optional context. Must include at least a user_prompt. See model_input fields below. |
| model_output | string | The LLM output to be evaluated and recorded with the event. |
| model_used | string | The model used to generate the output, like gpt-4o or o3. |
| run_mode | string | Run mode for the workflow event, which determines which models are used to evaluate the event. Available run modes (fastest to most thorough): super_fast, fast, precision, precision_codex, precision_max, and precision_max_codex. Defaults to fast. Note: super_fast does not support Web Search or File Search. If your workflow has these enabled, use a different run mode or edit the workflow to disable them. |
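The run_mode restriction is easy to trip over, so it can help to check it before submitting an event. The sketch below assumes you know your workflow's web_search and file_search settings locally; check_run_mode is a hypothetical helper, not an SDK function.

```python
# Run modes as listed in the table above, fastest to most thorough.
RUN_MODES = (
    "super_fast",
    "fast",
    "precision",
    "precision_codex",
    "precision_max",
    "precision_max_codex",
)


def check_run_mode(run_mode="fast", web_search=False, file_search=()):
    """Return run_mode if it is valid for the workflow's capabilities.

    Hypothetical helper: web_search and file_search mirror the workflow's
    optional parameters of the same names.
    """
    if run_mode not in RUN_MODES:
        raise ValueError(f"unknown run_mode {run_mode!r}; expected one of {RUN_MODES}")
    if run_mode == "super_fast" and (web_search or file_search):
        raise ValueError(
            "super_fast does not support Web Search or File Search; "
            "choose another run mode or disable them on the workflow"
        )
    return run_mode
```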

model_input Fields

| Field | Type | Required | Description |
|---|---|---|---|
| user_prompt | string | Yes | The user prompt sent to the LLM. |
| system_prompt | string | No | The system prompt used to configure the LLM’s behavior. |
| ground_truth | string | No | The expected correct answer. Required when the workflow evaluates the ground_truth_adherence metric. |
| context | array | No | Structured context for the evaluation, such as conversation history or domain-specific facts. Each item should have role and content fields. Required when context_awareness is enabled on the workflow. |
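The conditional requirements in this table can be expressed as a small builder that assembles the model_input object and rejects incomplete combinations. This is a sketch under stated assumptions: build_model_input and its needs_ground_truth and context_awareness flags are hypothetical, standing in for what you know about your workflow's metrics and settings.

```python
def build_model_input(user_prompt, system_prompt=None, ground_truth=None,
                      context=None, *, needs_ground_truth=False,
                      context_awareness=False):
    """Assemble a model_input dict and enforce the documented requirements.

    needs_ground_truth: set True if the workflow evaluates ground_truth_adherence.
    context_awareness: set True if context awareness is enabled on the workflow.
    """
    if not isinstance(user_prompt, str) or not user_prompt.strip():
        raise ValueError("user_prompt is required")
    if needs_ground_truth and ground_truth is None:
        raise ValueError("ground_truth is required for ground_truth_adherence")
    if context_awareness and not context:
        raise ValueError("context is required when context_awareness is enabled")
    for item in context or []:
        if not {"role", "content"} <= set(item):
            raise ValueError("each context item needs role and content fields")

    model_input = {"user_prompt": user_prompt}
    optional = {
        "system_prompt": system_prompt,
        "ground_truth": ground_truth,
        "context": context,
    }
    # Leave out fields that were not provided.
    model_input.update({k: v for k, v in optional.items() if v is not None})
    return model_input


model_input = build_model_input(
    "What is the capital of France?",
    context=[{"role": "user", "content": "We are discussing European geography."}],
    context_awareness=True,
)
```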

Optional Parameters

| Field | Type | Description |
|---|---|---|
| nametag | string | A user-defined tag for the event. |

Retrieve Workflow and Event Details

You can retrieve workflow details and fetch events from a specific workflow. Events are processed asynchronously, so you will need to poll using the event ID until the status is Completed.
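A polling loop for this can be kept independent of the SDK by passing in the function that fetches an event. The sketch below assumes the fetched event behaves like a dictionary with a status key; wait_for_event and fetch_event are hypothetical names, and the interval and timeout defaults are arbitrary.

```python
import time


def wait_for_event(fetch_event, event_id, interval=2.0, timeout=120.0,
                   sleep=time.sleep, clock=time.monotonic):
    """Poll fetch_event(event_id) until its status is "Completed".

    fetch_event is whatever call you use to retrieve an event; it is assumed
    to return a dict-like object with a "status" key. Raises TimeoutError if
    the event has not completed within `timeout` seconds.
    """
    deadline = clock() + timeout
    while True:
        event = fetch_event(event_id)
        if event.get("status") == "Completed":
            return event
        if clock() >= deadline:
            raise TimeoutError(
                f"event {event_id} did not complete within {timeout} seconds"
            )
        sleep(interval)
```

Injecting sleep and clock keeps the loop testable without real waiting, and the timeout prevents a stuck event from blocking the caller indefinitely.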

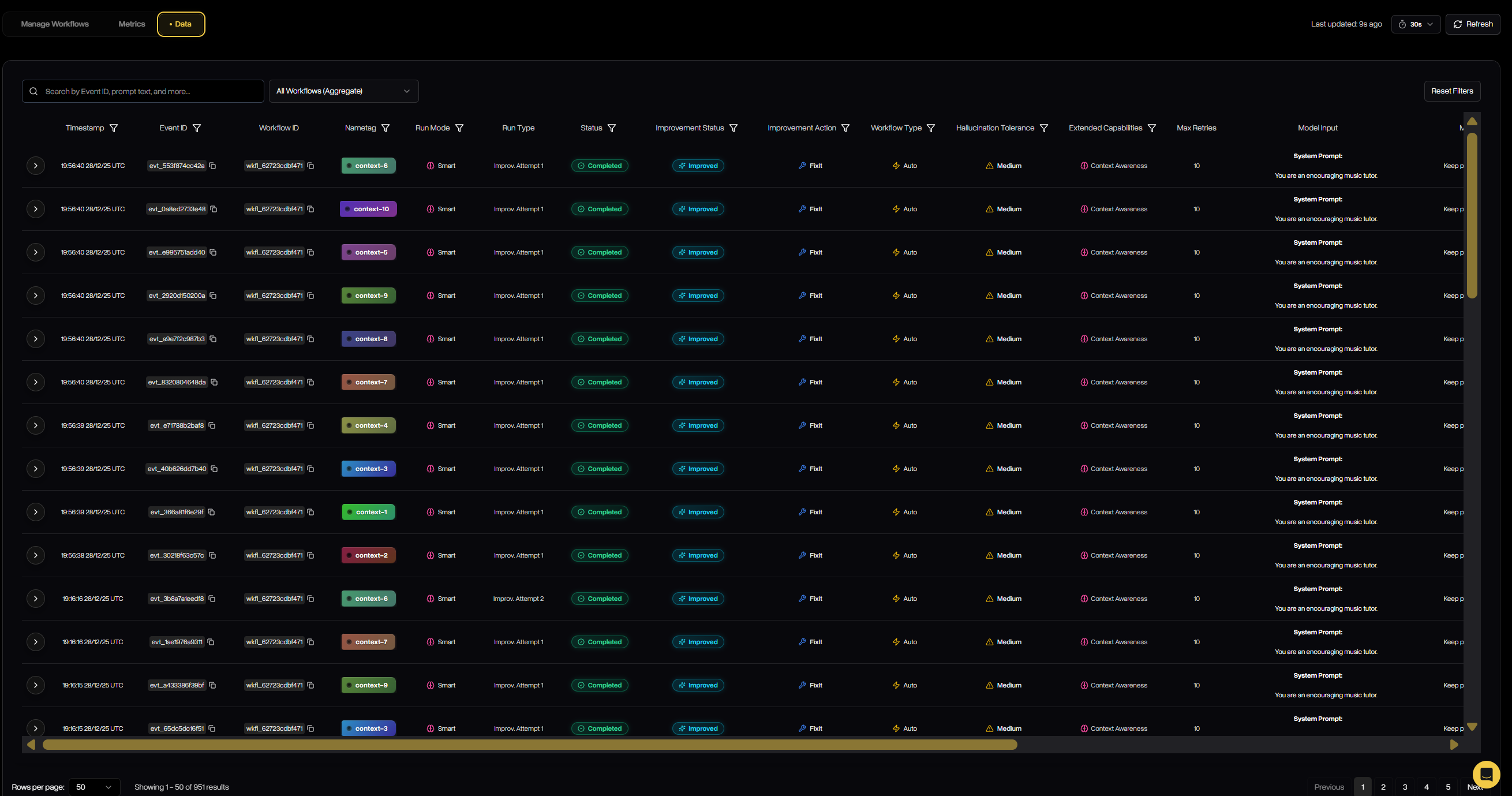

Check Defend Outcomes via the API Console

- Open DeepRails API Console → Defend → Data.

- Filter by time range or search by workflow_id or nametag to find events.

- Open any event to see guardrail scores and remediation chains (FixIt/ReGen).

Next Steps

Configure Guardrails

Explore the metrics behind evaluations—correctness, safety, completeness, and more.

Defend Overview

Learn how workflows, metrics, and remediation work together.